AI can now find zero-days

But this changes little. You didn't really need a zero-day to cause a ruckus.

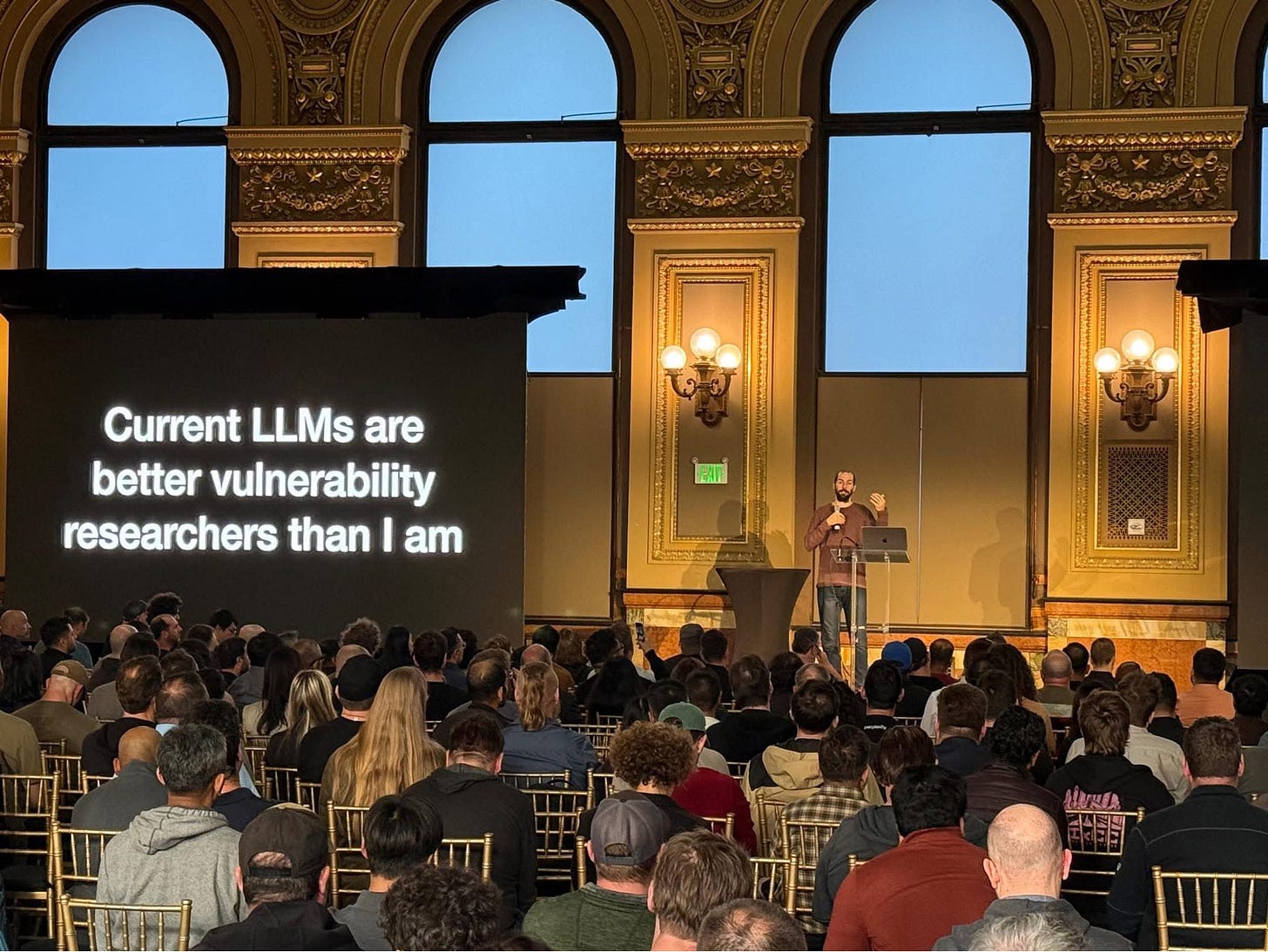

Last week I published part 1 of the Telegram series. Of course, I will wrap that up. Today, however, I felt compelled to write about a presentation by Nicholas Carlini (currently at Anthropic), since it shook the tech zeitgeist.

Essentially, anybody can now prompt a model to find critical software vulnerabilities. According to keyboard pundits, we are about to be in a world of hurt. I’m willing to play the contrarian here and argue that nothing fundamental will change in the balance between attackers and defenders. Finding all these vulnerabilities changes little.

Context. In February, Anthropic’s Red Team published a blog post demonstrating how Claude can, on its own, find a ton of vulnerabilities. A week ago, Nicholas gave a talk at Unprompted showing, live, how Claude found “zero-day vulnerabilities” in Ghost (an open-source alternative to Substack that I didn’t want to bother setting up) and in none other than one of the most publicly scrutinized codebases: the Linux kernel.

In computer security, a vulnerability is a flaw in some piece of software that can be exploited to do bad things. A zero-day vulnerability is a fancy way of saying a vulnerability that no one has seen before (the “zero” is the number of days defenders have had to fix it). Because no one has seen it before, defenses do not yet exist. Imagine, for example, you find a flaw in a government’s server that lets you read all the employees’ information. Or imagine you find a flaw in a company’s systems that lets you lock every computer and demand money to unlock them. Or imagine you find a flaw in people’s phones that lets you spy on all their texts, calls, emails, etc. Because never-before-seen vulnerabilities let you do all these crazy things, protecting against them is really important.

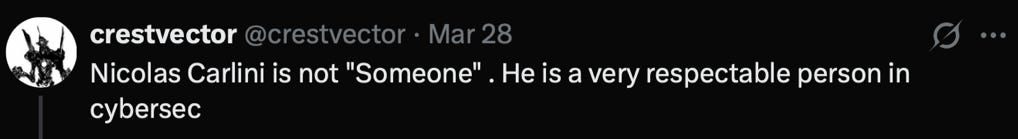

Finding zero-day vulnerabilities used to be really hard, especially in software that runs on a ton of devices, like the Linux kernel or iOS. Security nerds around the world dreamed of the day they would find one, not only because security agencies are constantly searching for top hackers, but because you’d immediately earn the kind of aura that has people online arguing about how you are not “some” security researcher.

So when Claude found a decently important vulnerability in the Linux kernel (an impressive feat for mere mortals who do not dream of electric sheep), the doomsday predictions began. The standard conclusion is that we are entering a period where anybody will be able to find critical vulnerabilities and that we should all be tremendously worried.

I think this is mostly wrong for two core reasons. First, attackers’ and defenders’ capabilities are more symmetric than the current narrative paints them. Second, for most people, what prevents them from exploiting computer systems is the prospect of going to jail for a long time.

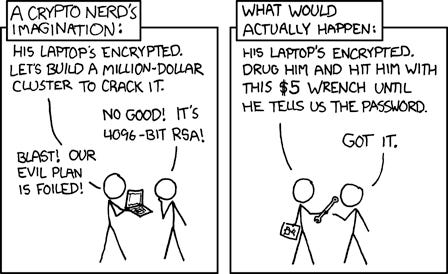

To explore these arguments, it’s useful to think of three broad groups of attackers we might face. First, you have what we like to call a “state actor” (e.g., CIA, NSA, Mossad, MI6, you get the point). You can safely assume that defending against these groups is mostly futile unless you are another state actor. If the CIA wants to get into your laptop, they can leverage a wide variety of tricks, one of which may be a zero-day exploit. And by the way, if they needed one before Claude, they could have just bought one for a few million dollars, pennies on their balance sheet. Even simpler, they can just come and take you and your laptop away.

Then there are sophisticated for-profit criminal groups (hackers in black hoodies à la Mr. Robot). They are technically proficient but will most likely not implant secret chips in the factories where phones are made. Lastly, you have normoids (a.k.a. unsophisticated actors, such as your average Twitter tech influencer). They are proficient in vibe coding and logging into their router. Congratulations, you have just done something called “threat modeling.”

Okay, back to the counterargument: if language models are good at finding vulnerabilities, they are good at the same reasoning that underlies patching them. Offense and defense are not drawing from different capability pools. They are running the same models against the same code. The question is not whether AI helps attackers (it obviously does) but whether it helps them more than it helps defenders. There is no reason to think the answer is yes. If anybody can now use Claude to find vulnerabilities to exploit, then anybody can now use Claude to find vulnerabilities to patch. Saying AI only helps attackers is like saying faster cars only help bank robbers.

The first objection to the claim above is that patching (i.e., fixing vulnerabilities) is harder than finding them. A common motto is “defenders need to be lucky every time, attackers need to be lucky only once.” On top of that, verifying an attack is cheaper than verifying a patch. When you patch a vulnerability, you need to make sure you don’t accidentally break something else. When you write an exploit, you just need to find one thing that works. But is it that simple?

The cost of verification is also high for a successful attack campaign. Exploitation is but one step in a multi-step operation. You broke into a server. Now what? If your goal is to make money, you need to find something valuable, extract it, cash out, and so on. And you need to do all of this without fucking up, because if you fail to cover your tracks and you are not a state-sponsored actor, it’s federal prison for you.

This last point deserves more attention than it typically gets. The security conversation almost always treats technical capability as the binding constraint on bad behavior. But think about what actually kept script kiddies in check for the last twenty years. It wasn’t that Kali Linux was too hard to use, or that Metasploit required a PhD (two tools you can download and play with right now). It was that the FBI has a long memory and long arms, and federal prosecutors are fond of making examples. Casual and not-so-casual criminals newly empowered by AI still fear the law; 3D-printed guns didn’t turn everybody into a killer. Non-state attackers also still have to figure out how not to be idiots about attribution, lest they get extradited to the US to pay their dues.

The natural objection is to move the goalposts: “Claude is good at exploitation now, but in the future it will handle the whole kill chain: reconnaissance, exploitation, exfiltration, hell, even cashing out!” Maybe. But if that day comes, the same model will also be able to patch bugs without wrecking the rest of the codebase, a lower bar to clear than running an entire kill chain. The capability argument cuts both ways or it cuts neither.

But all of this assumes we are transitioning from a world that was reasonably secure into one that is exploitable. The truth is the state of software security was already bad for everyone, not just for people facing nation states. Before AI, software was already outdated, unpatched, and vulnerable. People were falling for phishing links, writing passwords on sticky notes, plugging in random USB drives. Even “naïve” teenagers had access to enough tools to target high-ranking US government officials. You didn’t need a zero-day to make a mess. Arguably, there were already more vulnerabilities than anyone knew what to do with.

None of this is to discount the work that Anthropic’s Red Team is doing. An autonomous agent finding and exploiting zero-days seemed impossibly distant back when I was an undergrad researcher. It is truly remarkable. But capability claims without a threat model just produce fearmongering, the kind of fodder CISOs and security startups love to serve up, because peddlers of software security are always incentivized to paint a bleak future: a future only they and their products can fix.

AI can now automatically create zero-days? So what? Most people’s password is still their dog’s name.
