The performance was always the product

AI didn't invent fake influencers; it just made them cheaper to produce and harder to ignore.

AI influencers are here. This was entirely expected. Even before generative AI was a thing, we (meaning us, humans) had been toying with the idea of virtual/artificial personas, in social media and beyond: just look at Gorillaz or Hatsune Miku. But of course, much of the Internet is for prurient uses. So it seemed like only a matter of time until we saw AI influencers used for explicit content on platforms like OnlyFans or Fanvue. Recent reporting paints these AI sex workers as a new phenomenon. But in reality, “fake” accounts selling “fake” adult content were already here.

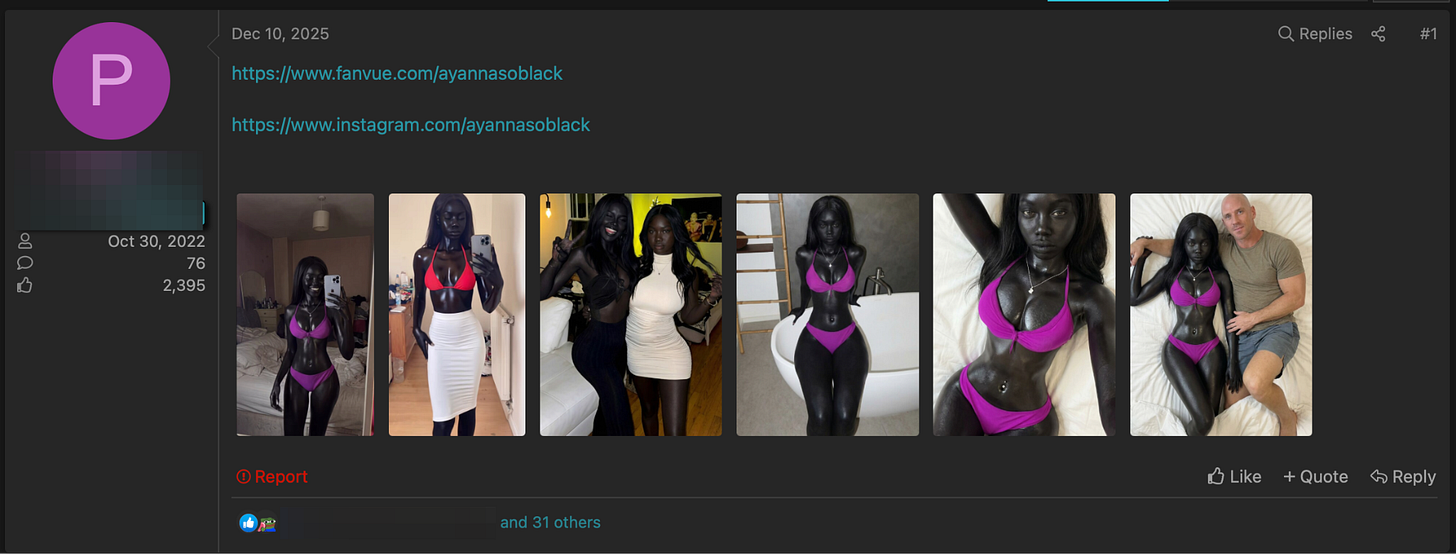

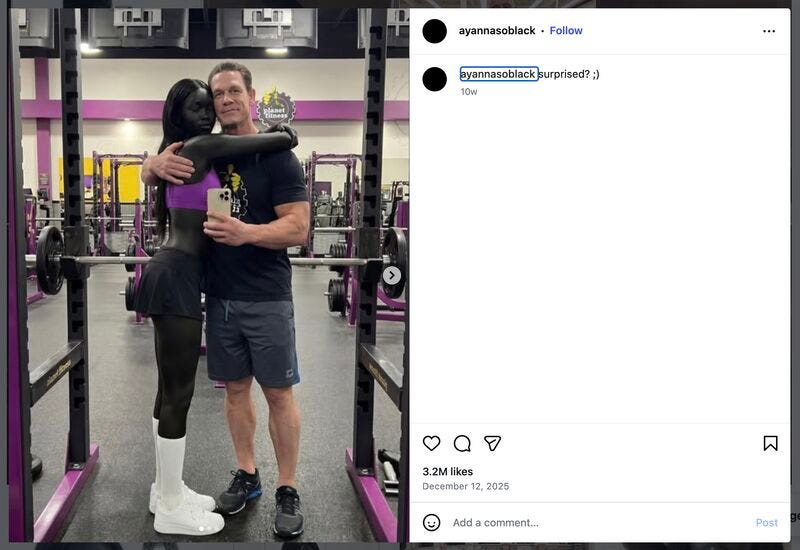

One of the most notable recent cases of a fake OnlyFans model going viral is AyannaSoBlack (now banned from Instagram). A picture of her and former-WWE-star-turned-actor John Cena at Planet Fitness accumulated hundreds of thousands of digital ogles across social media. Like those of other adult content creators, her profile invited you to know her more intimately for a low price. People were so enthralled by her allure that requests for her adult media quickly surfaced on adult piracy forums. What turned this into a fiasco was the collective realization (and disappointment) that she had been artificially generated by matrix multiplication. The account was deliberately built to deceive users. For example, in Q&A-style posts the model in question would explain that her fascinating dark pigmentation was due to South Sudanese genetics. As per modern internet etiquette and morality (as well as OnlyFans guidelines): undisclosed AI use is a no-no.

Now, the first thing I want to dispel is the idea that this phenomenon is somehow new. A man pretending to be a woman online for profit is the oldest trick in the book.

When I was barely a teenager, I discovered MMORPGs. WoW and Ragnarok were my go-tos. While these games were instrumental in my acquisition of the English language, they also demanded copious hours of repetitive tasks to make in-game money and to obtain cool shiny items. However, if toiling around those universes collecting minerals and such was not your calling, you could do what my friend N did: he would create a female character, befriend presumably male players, and try to convert them into patrons. And just like that, N (a then-13-year-old from middle-of-nowhere South America with a limited yet expanding English vocabulary) adeptly demonstrated why the FTC’s statistics on romance scams make complete sense (as do, unfortunately, the stats about online grooming).

If N had not gone to college, he would’ve certainly taken part in one of the many communities that realized they could pretend to be women online for monetary gain. As I hinted at in the previous paragraph, romance scams are one flavor of this approach. You receive a message from an unknown number who wants to befriend you. They lead you to a WhatsApp or Telegram chat with an attractive person as a profile picture. They then try to convince you to part with your money using a variety of techniques, ranging from persuasion to (s)extortion. Scammers are allegedly pulling in more than a billion dollars with playbooks like this.

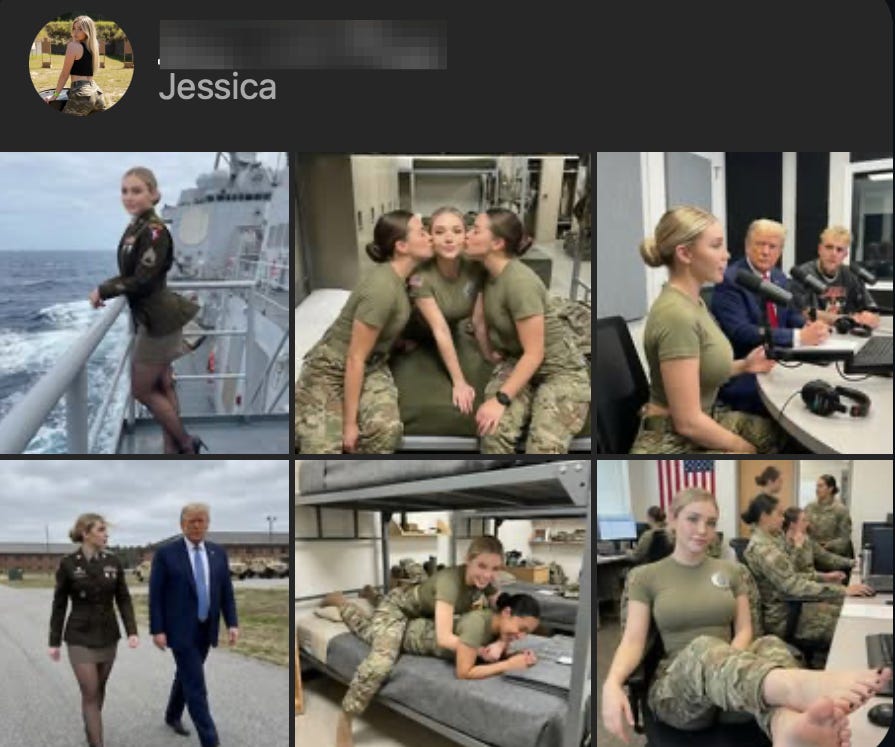

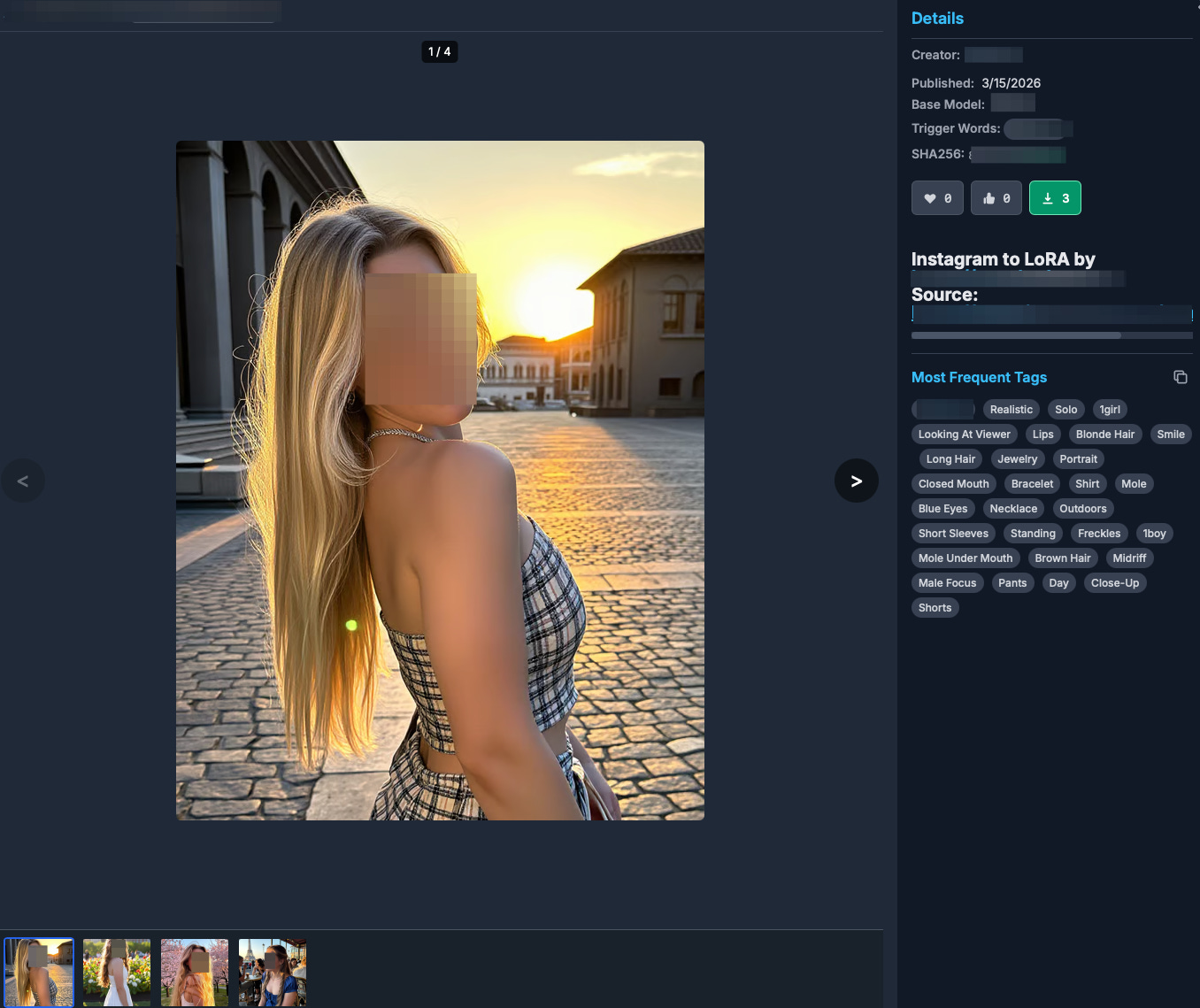

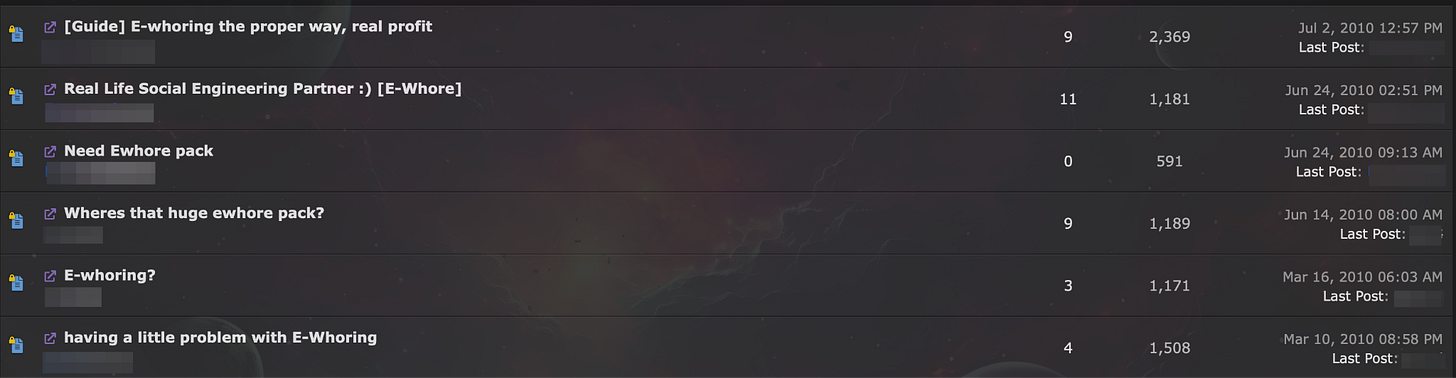

Whereas in a traditional romance scam you build and leverage an emotional bond to extract value out of a victim, a parallel tactic involves convincing the victim to buy your spicy pictures. In 2019, Hutchings and Pastrana published a paper on what is known online as “eWhoring”: tricking people into buying adult content that you did not create. The first thing an aspiring eWhoring entrepreneur needs is the right type of content, or a “pack.” Procurement is the act of finding “packs” of pictures of the person you want to impersonate. Ideally, you want someone with a wide range of pictures (often taken from social media) who is not well known (few followers) and for whom you also have access to nude or sexual media (often obtained nonconsensually). This combination of attributes was challenging to achieve, so people in online forums also specialized in collecting and selling these packs. With a suitable pack, you could begin generating leads and closing sales.

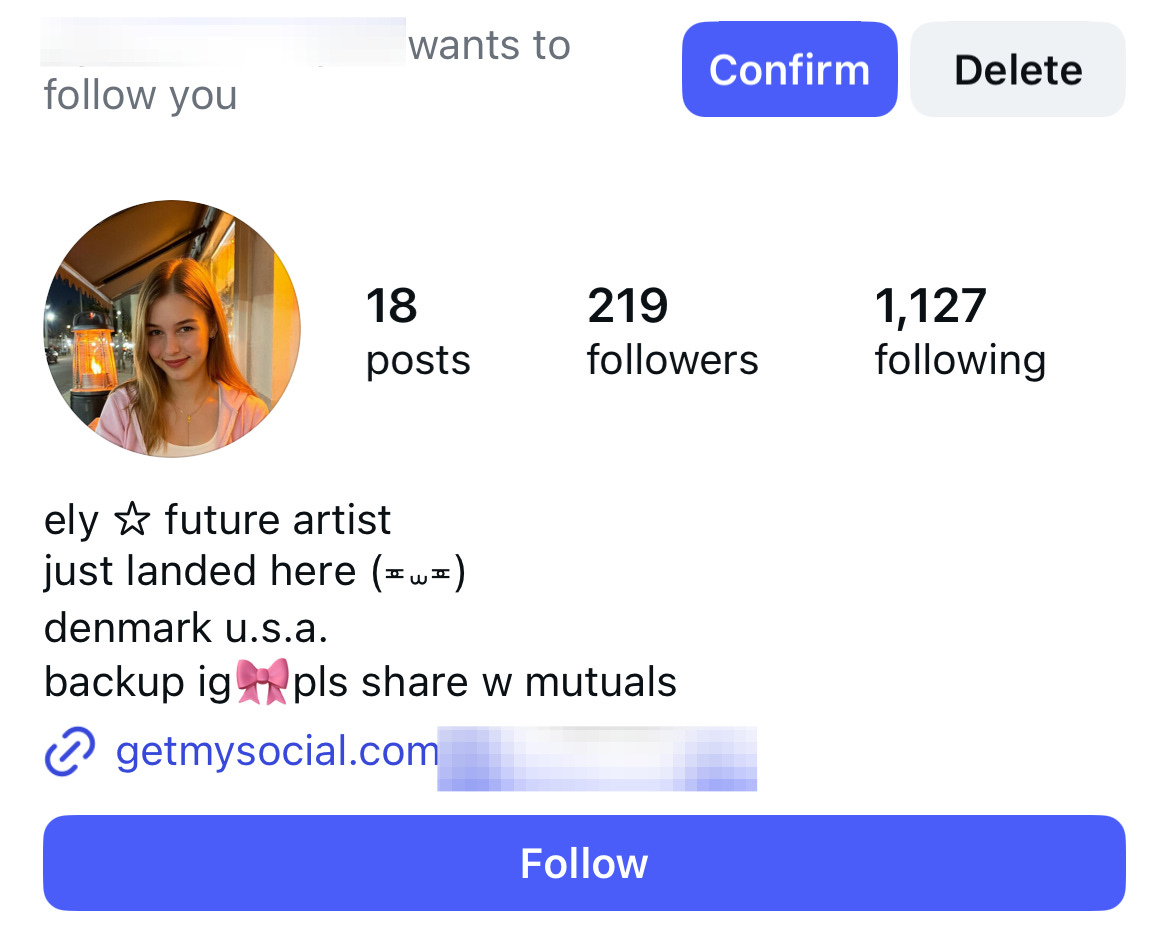

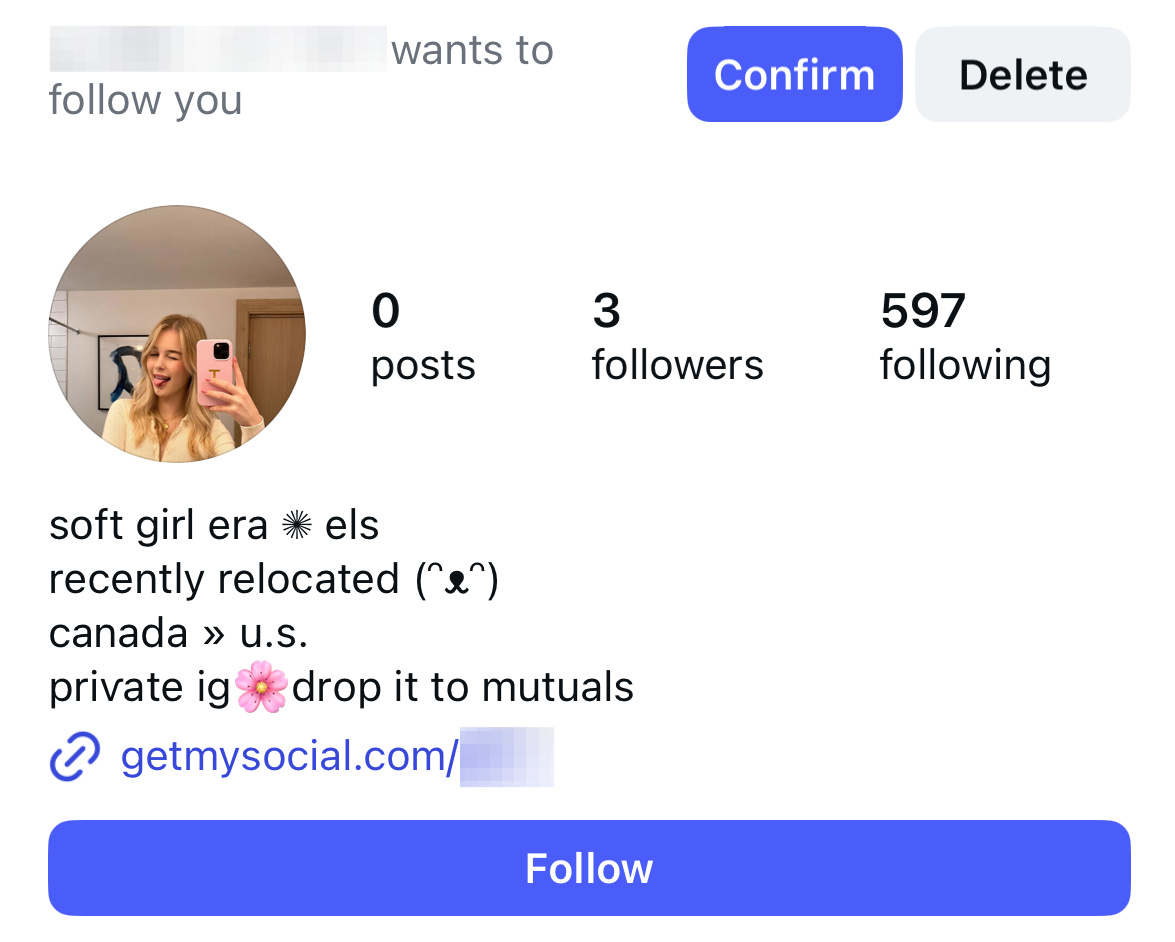

For the past 16 years, the playbook has remained relatively consistent. An account follows you on a social media platform (e.g., Instagram) and proceeds to persuade you to buy “premium” content. Early on, these sales were more ad hoc: they went through something like Venmo or, in more sophisticated cases, a landing page with a credit card terminal, similar to those that tried to sell you eBooks.

The advent of OnlyFans (OF) introduced a new paradigm for adult content creation, both for legitimate content creators and otherwise. OF made procurement substantially easier because it centralized a vast repository of explicit images. To create a pack, all you needed to do was find an OF model and their social media account, and you could start running a copycat account without them ever finding out. OF’s infrastructure also made monetization easier. And lastly, the platform’s opacity to outsiders made it harder for people to identify copycats: a model from Mexico would have a hard time knowing that a teen on the other side of the world was running an account with their stolen pictures.

AI has now upended the procurement paradigm once again. Any person can now be a victim because AI models allow the creation of non-consensual images, explicit or otherwise. I can run an Instagram account using someone else’s legitimate pictures and sell photorealistic explicit media of them on OnlyFans. Or I can take it up a notch and run completely synthetic Instagram and OF accounts, leveraging an AI model trained on their likeness. I can use this AI model to post pictures of them at school, at the beach, posing with John Cena, or performing whatever sexual acts my followers are willing to pay money for. On top of that, I can hire gig workers to help me out. To borrow a word that technoidealists love to use, AI has now democratized victimization.

When AyannaSoBlack was exposed, people were not outraged that they had paid for adult content. They were outraged that the intimacy had been fabricated, as if the intimacy was ever real. On top of that, keyboard doomers treated this phenomenon as some new dark AI-enabled innovation. But a man pretending to be a woman online for profit is, as I noted, a discovery that even a 13-year-old boy makes and exploits. Performances are powerful: the best adult content creators know this. They create micro-universes in which their subscribers can dream, for 5, 10, or more dollars a pop. We are just now entering a universe in which this parasocial fantasy, this performed desire, can be executed on an office floor somewhere in Eastern Europe… wait, we were literally already there.

Adjacent Reading

How AI-generated Influencers Exploit Celebrities to Sell Synthetic Nudes

OnlyFans Models Are Using AI Impersonators to Keep Up With Their DMs

Notes:

I use “OnlyFans” as a blanket term for similar platforms, including Fanvue.
