The Telegram Ecosystem (Part 2)

The most sensational things on Telegram are usually the least verifiable. The verifiable things are damning enough.

News about Claude Mythos dropped this week, along with its slow rollout through Project Glasswing. That is all very interesting, but it is not what we are going to talk about this week (though do check out last week's post, which covers those topics). Instead, we are going to do Part 2 of our Telegram series.

Yesterday, WIRED published a piece based on a new report by AI Forensics documenting abuse on Telegram. The headline: men are buying hacking tools to use against their wives and friends. The report behind it is a serious piece of work: 57 pages, 2.8 million messages analyzed across 16 Italian and Spanish-language Telegram groups, collected over six weeks. AI Forensics is a credible organization doing important research.

If you read Part 1 of this series, nothing in the report will surprise you. Of course Telegram hosts groups where people share non-consensual images. Of course bots play a central role in automating and scaling operations. Of course the groups regenerate hours after being shut down. Telegram has the infrastructure for all of these things. The report documents real harm happening to real people, and the finding that most victims are ordinary women targeted by people they know (partners, ex-partners, acquaintances, colleagues, and classmates) is consistent with everything the academic literature has established about image-based sexual abuse for the past decade.

Where the report runs into trouble is not in what it found, but in what it claims to have found.

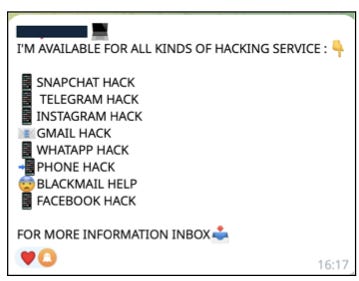

Consider the hacking services. The report documents over 18,000 references to spying or surveillance tools, and 441 references to hacking services. Screenshots show users advertising “professional hacking on commission,” promising access to phones and social media accounts. The presentation is alarming. It is also, to anyone who has spent meaningful time in these spaces, mostly theater.

The people offering to hack your wife’s iPhone for $50 are not sitting on zero-day exploit chains. They are not former NSA operators moonlighting on Telegram. They are, overwhelmingly, scammers who can take your money because you will have nobody to complain to. The misspellings are a tell. The pricing is a tell. The vagueness of the technical claims is a tell. Real offensive cyber capabilities are not sold in Telegram groups with open enrollment. At the very least, you would hope your hacking contractor uses Signal.

This does not mean spyware is absent from Telegram. Commercial stalkerware is real, genuinely harmful, and I would not be surprised if there are groups where you can find this software and help deploying it. But the report does not clearly distinguish between a scammer posting a menu of hacking services he cannot deliver and a channel distributing actual surveillance software. The screenshot gets the same evidentiary treatment either way.

The same problem appears with the report’s most alarming claims about child sexual abuse material (CSAM). The report documents folders and offers that claim to contain CSAM. The claims are real, the groups exist, the advertisements exist, and the folders are labeled exactly as described. But a lot of what gets advertised in these spaces is also bait: marketing designed to extract payments. A folder can be labeled anything; the label is no guarantee that the content exists or that the advertiser can fulfill the promise. This is not speculation on my part; it is a pattern that anyone who has done sustained fieldwork in underground Telegram communities recognizes. Scammers impersonate the flashy content (usually the worst stuff) because the flashy content commands the highest prices and the most desperate buyers.

I want to be precise about what I am and am not saying. I am not saying CSAM does not exist on Telegram. It does. I am not saying these groups are harmless. The advertisements themselves normalize the content they describe, and some percentage of what circulates is genuine. I am saying that a methodology that treats advertisements as equivalent to verified content will systematically misrepresent the scale and nature of what is actually being transacted. The same thing happened with dark web marketplaces. People saw weapons and contract killings advertised on those markets, assumed such sales were common, and took the listings at face value.
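To make the overcounting concrete, here is a minimal sketch. The 441 figure is the report's count of references to hacking services; treating each reference as one offer, and the fulfillment rates themselves, are purely illustrative assumptions. Neither the report nor this post measures how many offers are genuine.

```python
def implied_supply(listings: int, fulfillment_rate: float) -> float:
    """Offers that could actually be delivered under an ASSUMED fulfillment rate."""
    if not 0.0 <= fulfillment_rate <= 1.0:
        raise ValueError("fulfillment_rate must be between 0 and 1")
    return listings * fulfillment_rate


# Count of references to hacking services, as stated in the AI Forensics report.
HACKING_REFERENCES = 441

# Hypothetical rates, chosen only to show the spread; nobody has measured these.
for rate in (1.0, 0.25, 0.05):
    genuine = implied_supply(HACKING_REFERENCES, rate)
    print(f"assumed rate {rate:.0%}: about {genuine:.0f} deliverable offers")
```

Counting advertisements as supply is equivalent to silently assuming a rate of 100%. The point is not that any lower number is correct, only that the headline figure depends entirely on a quantity the methodology never observes.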

Here is the distinction that matters: some evidence on Telegram is self-authenticating, and some is not.

When someone posts a non-consensual intimate image in a group, the posting is the harm. The image is real, the victim is real, and the damage is done the moment the file is shared. There is no gap between the advertisement and the product. A screenshot of this is sufficient evidence because the evidence and the harm are the same object.

When someone posts a listing advertising hacking services or CSAM access, the posting is not the harm––or at least, not the harm being claimed. The harm would require the service to be real and the content to be genuine. A screenshot of a listing is evidence of demand. It is evidence that someone believes there is a market for this. It is not evidence of capability or supply.

The report’s strongest findings (the non-consensual image sharing, the nudification bots, the doxxing) are all cases where the posting is the harm. These findings are methodologically sound because they do not require the researcher to verify anything beyond what is visible. The report’s weakest findings (the hacking tools, the CSAM claims, the spyware advertisements) are all cases where the posting is an advertisement for something that may or may not exist behind it. The methodology does not change between these two categories, but it should.
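One way to picture a methodology that does change between the two categories is to tag every finding with its evidence type before deciding what a screenshot can prove. This is a sketch of the distinction, not the report's actual coding scheme; the class and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class EvidenceKind(Enum):
    POSTING_IS_HARM = "the posting itself is the harm"
    ADVERTISEMENT = "the posting advertises something unverified"


@dataclass(frozen=True)
class Finding:
    label: str
    kind: EvidenceKind

    def screenshot_suffices(self) -> bool:
        # A screenshot is enough only when evidence and harm are the same object.
        return self.kind is EvidenceKind.POSTING_IS_HARM


# The six findings named above, sorted the way this post sorts them.
FINDINGS = [
    Finding("non-consensual image sharing", EvidenceKind.POSTING_IS_HARM),
    Finding("nudification bots", EvidenceKind.POSTING_IS_HARM),
    Finding("doxxing", EvidenceKind.POSTING_IS_HARM),
    Finding("hacking tools", EvidenceKind.ADVERTISEMENT),
    Finding("CSAM claims", EvidenceKind.ADVERTISEMENT),
    Finding("spyware advertisements", EvidenceKind.ADVERTISEMENT),
]


def needs_verification(findings: list[Finding]) -> list[str]:
    """Labels of findings where a screenshot shows demand, not capability or supply."""
    return [f.label for f in findings if not f.screenshot_suffices()]


print(needs_verification(FINDINGS))
```

Everything the function returns would need a second, independent step (a test purchase is rarely ethical or legal here, so in practice this often means stating the claim as unverified) before it can support a statement about what is actually being transacted.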

This brings me to a tension I cannot resolve, one that is pervasive in this type of research.

The report’s unreliable evidence points at real problems. Telegram likely hosts spyware distribution. Telegram does host CSAM. The fact that most of the specific listings documented in this report are unverified (and possibly scams) does not mean the underlying harms are fictitious. So if a policymaker reads this report and concludes that Telegram needs stricter oversight––which is AI Forensics’ explicit recommendation, calling for Telegram to be designated a Very Large Online Platform under the DSA––they have arrived at a defensible conclusion through partially indefensible evidence.

Similarly, the WIRED headline may be true, but it is not necessarily supported by the evidence it points to. Is that acceptable? Scientifically, no. Practically, I do not know.

Policy built on misunderstood evidence can misallocate enforcement resources. If regulators believe Telegram is primarily a marketplace for sophisticated hacking tools, they could prioritize interventions for a threat that barely exists while ignoring the one that does: the low-barrier, high-volume sharing of non-consensual intimate images by ordinary men targeting ordinary women. But demanding perfect evidence before acting means never acting, because Telegram’s opacity is precisely what makes perfect evidence impossible.

We do not need to prove that Telegram hosts sophisticated spyware operations to have grounds for action or moral panic. The verified findings––that thousands of people are sharing non-consensual images of women they know, at scale, with essentially no friction––should already be enough. We do not need to reach for more.

Adjacent Reading

Cuevas et al. Measurement by Proxy: On the Accuracy of Online Marketplace Measurements.

Indicator. The Indicator Guide to Using Telegram in Digital Investigations.

Campobasso et al. You Can Tell a Cybercriminal by the Company they Keep: A Framework to Infer the Relevance of Underground Communities to the Threat Landscape.

Nucleo. Telegram App Says It Prioritizes Child Safety. Its Bots Tell a Different Story.

PS: Thanks to Miranda Wei for sharing the WIRED piece and AI Forensics report.